Proper bash training - #1 Introduction, practises and shellcheck

Updated (DD-MM-YYYY): 01/09/2019

They are everywhere. On each OS and continent. They keep a lot of amount servers and infrastructures around the world. Usually, they are hidden from the “civilians”, but still deadly important. There are simple ones, called temporary (common scenario). But there are very advanced examples as well. Sometimes they replace normal software as it’s much simpler, but sometimes they only act as a glue. Sometimes removing only 1 tiny piece of such “unimportant” element is practically impossible, as the rest part of project/infra would fall apart.

If in the future, there will be somekind of AI, which we are scared today, these have the biggest chances to be on the first line in this AI army. About what I’m talking now you may ask? About bash scripts. This subject is always up to date, for admins, devopses, programmers. Over the years, there were introduced many bad habits, practises, approaches and this is the moment to challenge them now. Now we will try to learn how to write good bash scripts today, according to todays standards. We have to, as these inconspicious scripts still are keeping everything working as expected.

Bash scripts are quite interesting topic as most new linux admins, when they only discover this area, they are so excited that they can automate every single command as they completely don’t think about some organisation or practises. They just write scripts in ad-hoc manner and later, problems with maintain will arise if we won’t keep an eye on this approach.

- Movie

- Script (flow diagram)

- Bad script example

- Shellcheck

- Good script example

- Better good example

- Summary

- Links

Movie

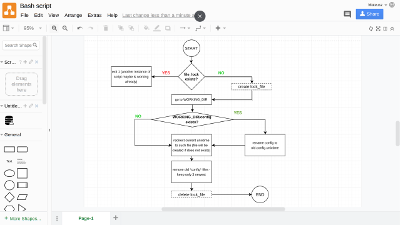

Script (flow diagram)

Today I will show you a good and bad example of a script and approach and tool, which should be your new the mandatory tool, which you should use while writing your bash scripts. Before we start, let’s have a look on this flow chart about this example script which will be used here.

This is a very simple example. So, we start our script and instantly script checks if the file_lock file is present. This technique, this file locking mechanism, is used to prevent multiple instances of the same script working on same data. Don’t ask me why run multiple instances of the same script at the same time mostly, but let’s assume this is the case. So if our script detects a temporary file which acts as a locking mechanism, then it immediately returns with exit code different than 0, 1 in this case (for instance, this does not matter now).

If file lock does not exist then script creates this file and from that point this current instance of the script can exclusively work on it’s duties. In the next step, the script goes into WORKING_DIR directory as in this directory we will doing something with files. When script enters to this dire, it checks if WORKING_DIR/config file exists, if yes, then it renames this file to old.config.unixtimenum pattern. If this file, I mean WORKING_DIR/config does not exist, it will be simply created by using redirection with the newest unixtime taken from the script. After this, script tries to keep only the last 3 newest such files (config and old.config.unixtimenum) - rest will be removed to prevent from creating of too many such files, and possibly in the future there will be no free space because of that.

And in the last step, the file lock is ofcourse removed and our script finishes it’s job. This example is very basic and perfect to show you how important good practises are and how important is to use good tool while writing bash scripts.

Before we go further, we will install shellcheck program which is some kind of linter for bash, written in Haskell. This tool will help us a lot with writing good scripts. Most popular distributions should have this program in their repositories - there are packages in Ubuntu and CentOS repositories. If you are more ambitious you may go to github page for the code and compile it by yourself.

This brings as to question: do I really need this tool to writing even simple scripts? Usually, we can meet approaches like this script has only couple lines and it's temporary. Well, from my experience 90% of scripts are not temporary and over the years they evolve to houndrets or thousands lines of code. That’s why I think a good approach is always the key, even if our script is very simple.

Bad script example

First, we will go over a bad example. This is the script which you should not try even run as it can remove your files. This is only for presentation purposes but it shows us many bad practises. This is a very simple example in my opinion.

1#!/bin/bash

2

3### This script is for BAD PRACTISES presentation, DO NOT RUN this by your own

4### as it can delete your files!

5

6# Below lines for people who don't care and will try to run it

7echo "Only for presentation purposes, do not run this - can remove your files!"

8exit 255

9

10### BAD PRACTISE: we don't know if $1 is set

11WORKING_DIR=$1

12

13# Checking if lock script exists

14### BAD PRACTISE: hardcoded file - error prone

15if [ -e "/tmp/bad-script-lck-file" ]; then

16 echo "File lock found, another instance is running?"

17 exit 1

18fi

19

20# Create lock file to prevent another script do something while this one is working

21### BAD PRACTISES:

22### - hardcoded lock file

23### - what if touch fails (eg: permission, full disc space)?

24touch /tmp/bad-script-lck-file

25

26# Checking if WORKING_DIR exist

27### BAD PRACTISES:

28### 1) This only checks directory but no rights to access (eg: /root?)

29### 2) Currently we have lock_file created, if this exit, file will be preserved

30if [ ! -d "$WORKING_DIR" ]; then

31 echo "$WORKING_DIR does not exist, leaving."

32 exit 2

33fi

34

35# Go to WORKING_DIR

36### BAD PRACTISE:

37### 1) What if we can't enter to this directory albeit it exists (eg: /root)?

38cd $WORKNG_DIR

39

40# Working with config file

41### BAD PRACTISES:

42### 1) Config file hardcoded - highly error prone

43### 2) mv command not quoted - can be dangerous

44### 3) `` is not recommended to wrap command, we are using $()

45### 4) what if mv command fails?

46### 5) what if redirection to config file fails (eg: full disc space, no permissions)?

47### 6) we don't know if we are in the correct dir - hence config file may be overwritten

48if [ -e "config" ]; then

49 mv config old.config.`date +%s`

50else

51 `date +%s` > config

52fi

53

54# Removing old timestamp file - keeping only last three

55### !!! DANGEROUS !!!

56### BAD PRACTISES:

57### 1) Highly DANGEROUS as we don't know even if we are in the correct directory :-)

58### 2) No error code checking

59### 3) No file glob usage (all files in $PWD will be affected)

60rm -f `ls -t | tail -n +4`

61

62# Finally remove lock file to allow another instances of this script to work

63### BAD PRACTISES:

64### 1) Some cases before ommited hence this will be executed when all script path is executed

65### 2) What if rm command fails

66rm -f /tmp/bad-script-lck-fileI think same comments are quite enough to figure out what’s going on here. There are many errors and bad practises presented here - for instance: hardcoded file lock which is highly error prone. Imagine what would happen if there will be misspelling in file lock in one place? Moreover, we don’t check if we can even enter to the destination dir and later we are working on a dir, which maybe different from this which we would expect.

Shellcheck

The real question here is: do we have a tool which will help us with such script? The answer is YES! I mean shellcheck of course, but not only (I will explain later on good example). If we use shellcheck, then it shows us errors, suggestions, problematic lines as you can see on the outbout below. There is also posibillity to easy integrate shellcheck with popular editors - here in the movie I show vim with syntastic plugin which uses shellcheck to verify bash script in the editor window.

1$ shellcheck bad-script

2

3In bad-script line 38:

4cd $WORKNG_DIR

5^-- SC2164: Use 'cd ... || exit' or 'cd ... || return' in case cd fails.

6 ^-- SC2153: Possible misspelling: WORKNG_DIR may not be assigned, but WORKING_DIR is.

7 ^-- SC2086: Double quote to prevent globbing and word splitting.

8

9

10In bad-script line 49:

11 mv config old.config.`date +%s`

12 ^-- SC2046: Quote this to prevent word splitting.

13 ^-- SC2006: Use $(..) instead of legacy `..`.

14

15

16In bad-script line 51:

17 `date +%s` > config

18 ^-- SC2092: Remove backticks to avoid executing output.

19 ^-- SC2006: Use $(..) instead of legacy `..`.

20

21

22In bad-script line 60:

23rm -f `ls -t | tail -n +4`

24 ^-- SC2046: Quote this to prevent word splitting.

25 ^-- SC2006: Use $(..) instead of legacy `..`.

26 ^-- SC2012: Use find instead of ls to better handle non-alphanumeric filenames.

Good script example

Now it’s time for the good example. In this good example we will use bash set bultin for setting very important options which can help us during maintaining script. These options are also checked/suggested by shellcheck.

We use set -e to “force” error handling if a command exited with different error code than 0.

In such case, we have to handle this error using pipeline operator ||, otherwise the script will

immediately stops.

Another option, set -u cases script error if we try to use undefined (unbound in bash terminology) variable. Neat.

It’s worth to metion that the above options are also verified by shellcheck itself, but if we use shellcheck it’s always recommended to use these options at the same time. Please be

1#!/bin/bash

2

3## In case of any error without pipelining - exit immediately

4set -e

5

6## Exit if there is unbound (unused) variable

7set -u

8

9## Important variables, even with rm command

10working_dir=$1

11lock_file="/tmp/good-script-lock-file"

12cmd_rm="rm -f"

13cmd_mv="mv"

14cmd_find=(find . -maxdepth 1 -type f -iname "*config*" -printf "%p\n")

15prefix="old"

16config_file_name="config"

17how_many_newest_files_keep=3

18

19## Removing lock file - function as this will be needed in many cases

20function remove_lock_file()

21{

22 if [ -e "${lock_file}" ]; then

23 ${cmd_rm} "${lock_file}" || {

24 echo "Cannot remove lock file: ${lock_file}" >&2;

25 exit 4

26 }

27 fi

28}

29

30## Catch Ctrl+C signal and cleanup

31trap remove_lock_file SIGINT

32

33## Checking if lock file exist

34if [ -e "${lock_file}" ]; then

35 echo "File lock found, another instance is running?" >&2;

36 exit 1

37fi

38

39## Create lock file to prevent another script do something while this one is working

40touch "${lock_file}" || {

41 echo "Cannot create lock file - exiting" >&2;

42 exit 2

43}

44

45## Go to working dir

46cd "${working_dir}" || {

47 echo "Cannot enter to working dir." >&2;

48 remove_lock_file

49 exit 3

50}

51

52## Creating config_file_path and time postfix

53config_file_path="${working_dir}/${config_file_name}"

54time_now=$(date +%s)

55

56if [ -e "${config_file_path}" ]; then

57 ${cmd_mv} "${config_file_path}" "${prefix}.${config_file_name}.${time_now}" || {

58 echo "Cannot rename ${config_file_path} as ${prefix}.${config_file_name}.${time_now}" >&2;

59 remove_lock_file

60 exit 5

61 }

62fi

63

64echo "${time_now}" > "${config_file_path}" || {

65 echo "Cannot save unixtime ${time_now} to ${config_file_path}" >&2;

66 remove_lock_file

67 exit 6

68}

69

70## Removing old and keep X last

71how_many=$((how_many_newest_files_keep + 1))

72

73for file in $( "${cmd_find[@]}" | sort -rz | tail -n +${how_many}); do

74 ${cmd_rm} "${file}" || {

75 echo "Cannot remove old file: ${file}" >&2;

76 remove_lock_file

77 exit 7

78 }

79done

80

81## Finishing work

82echo "OK"

83remove_lock_fileAs you can see, this good example script is designed mostly for each unexpected case that can happen during it’s work. This is the approach from software development, it’s more demanding as it requires more code to handle corner cases, however it’s really worth this additional effort. If this script can be maintaned or developed more in the future, then we will embrace this approach sooner or later. Hence, we should use this method of writing bash script as our “default”.

Better “good example”

Allegedly the “proper” example presented above caused some controversions and I’ve got some suggestions for improvements. Thanks for all contributors for their feedback, this is important job - my examples will be better here, and, as a result, maybe some scripts in some real projects.

What has been changed in the new version:

- more portable shebang is used now

printfreplacedecho, see here and here why- locking mechanism has been changed - now

mkdiris used with a proper directory to this purpose (/var/lock). There were two alternatives:mkdirandflock,mkdiris easier and more portable to use whereasflockis more iditiomatic (designed) to thus purposes albeit more complicated in usage. As I’ve found on the Internet, there is no consensus which method is objectively best, especially if we have to consider locking on NFS shares. The best locks probably will involve completely different external solutions - like key-value storages (redis). - I’ve made a small refactor, especially functions - there are more than one - now changing lock mechanism is much easier. These functions also use local variables now as idiomatic (via

localdeclaration)

In my opinion $PATH is not a huge problem here and that’s why I haven’t changed anything regarding this area. If there are problems with command or event there are no some commands - script will fail anyway, hence adding code here to ensure $PATH is really not necessary.

1#!/usr/bin/env bash

2

3## In case of any error without pipelining - exit immediately

4set -e

5

6## Exit if there is unbound (unused) variable

7set -u

8

9## Important variables, even with rm command

10working_dir=$1

11lock_file_or_dir="/var/lock/good-script-lock-dir"

12cmd_locking="mkdir ${lock_file_or_dir}"

13cmd_check_lock="test -d ${lock_file_or_dir}"

14cmd_unlocking="rm -rf ${lock_file_or_dir}"

15cmd_rm="rm -f"

16cmd_mv="mv"

17cmd_find="find"

18cmd_find=(${cmd_find} . -maxdepth 1 -type f -iname "*config*" -printf "%p\n")

19prefix="old"

20config_file_name="config"

21how_many_newest_files_keep=3

22

23## Checks if lock is active

24## 1 for true, means is active

25## 0 for false, means there is no active lock

26function is_already_running()

27{

28 local cmd_check_lock=${1}

29

30 ${cmd_check_lock} || {

31 return 1

32 }

33

34 return 0

35}

36

37## Creating lock

38function create_lock()

39{

40 local cmd_locking=${1}

41

42 ${cmd_locking} || {

43 printf "Cannot create lock\n"

44 exit 2

45 }

46}

47

48## Removing lock

49function remove_lock()

50{

51 local cmd_unlocking="${1}"

52 ${cmd_unlocking} || {

53 printf "Cannot unlock\n"

54 exit 3

55 }

56}

57

58## Catch some signals like Ctrl+C and clean up

59trap 'remove_lock "${cmd_unlocking}"' SIGINT SIGTERM

60

61## Check if another instance is running....

62if is_already_running "${cmd_check_lock}" ; then

63 printf "Cannot acquire lock (another instance is running?) - exiting.\n"

64 exit 1

65fi

66

67## Now create lock

68create_lock "${cmd_locking}"

69

70## Go to working dir

71cd "${working_dir}" || {

72 remove_lock "${cmd_unlocking}"

73 printf "Cannot enter to working dir.\n" >&2 ;

74 exit 4

75}

76

77## Creating config_file_path and time postfix

78config_file_path="${working_dir}/${config_file_name}"

79time_now=$(date +%s)

80

81if [ -e "${config_file_path}" ]; then

82 ## mv command mail fail hence we are checking this here

83 ${cmd_mv} "${config_file_path}" "${prefix}.${config_file_name}.${time_now}" || {

84 printf "Cannot rename %s as %s.%s.%s\n" "${config_file_path}" "${prefix}" "${config_file_name}" "${time_now}" >&2 ;

85 remove_lock "${cmd_unlocking}"

86 exit 5

87 }

88fi

89

90printf "%s" "${time_now}" > "${config_file_path}" || {

91 printf "Cannot save unixtime %s to %s\n" "${time_now}" "${config_file_path}" >&2 ;

92 remove_lock "${cmd_unlocking}"

93 exit 6

94}

95

96## Removing old and keep X last

97how_many=$((how_many_newest_files_keep + 1))

98

99for file in $( "${cmd_find[@]}" | sort -rz | tail -n +${how_many}); do

100 ## mv command may fail hence are are checking this here

101 ${cmd_rm} "${file}" || {

102 printf "Cannot remove old file: %s\n" "${file}" >&2 ;

103 remove_lock "${cmd_unlocking}"

104 exit 7

105 }

106done

107

108sleep 10

109

110## Finishing work

111remove_lock "${cmd_unlocking}"

Summary

As you can see on both examples, writing a good bash script requires some changes in mindset and approach, to more developer-oriented I would say. Using additional tool, like shellcheck is also a very good idea to improve our experience here. Such tool can provide really good suggestion to our code, not only brutal critics as some people might expect.

It may looks like overkill for some small scripts, this kind of approach, however, from my experience I may recommended this method. Yes, we have to write more code here and handle many corner cases, but then we should sleep well.

Links